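A brief introduction to MCP

As large models have evolved, MCP has become a very useful protocol (or tool) for the people who use them. The Model Context Protocol (MCP) is a communication protocol designed for large language models and AI systems. It addresses a core problem in AI interaction: how to manage, pass, and control context information effectively. In practice, it standardizes how an application (the MCP client) connects a model to external tools and data exposed by MCP servers. As an analogy, if an AI model is an intelligent assistant, MCP is the communication mechanism that lets the assistant "remember" the earlier conversation, understand the background of the current question, and respond according to specific rules.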

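How MCP works

Basic structure

MCP messages are exchanged as JSON-RPC 2.0. Conceptually, the information involved in an exchange can be grouped into three main parts:

- Context container: stores information such as the conversation history, system instructions, and user background
- Control parameters: settings that tune model behavior, such as temperature and maximum output length
- Message body: the input that currently needs to be processed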

    ┌─────────────────────────────────┐
    │ MCP request/response structure  │
    ├─────────────────────────────────┤
    │                                 │
    │   ┌─────────────────────────┐   │
    │   │    Context container    │   │
    │   │ ┌─────────────────────┐ │   │
    │   │ │ Conversation history│ │   │
    │   │ └─────────────────────┘ │   │
    │   │ ┌─────────────────────┐ │   │
    │   │ │ System instructions │ │   │
    │   │ └─────────────────────┘ │   │
    │   │ ┌─────────────────────┐ │   │
    │   │ │   User background   │ │   │
    │   │ └─────────────────────┘ │   │
    │   └─────────────────────────┘   │
    │                                 │
    │   ┌─────────────────────────┐   │
    │   │   Control parameters    │   │
    │   └─────────────────────────┘   │
    │                                 │
    │   ┌─────────────────────────┐   │
    │   │      Message body       │   │
    │   └─────────────────────────┘   │
    │                                 │
    └─────────────────────────────────┘
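The three parts described above can be modeled as plain Java records. This is only an illustrative sketch: the type names (`ContextContainer`, `ControlParameters`, `Request`) and their fields are hypothetical, derived from the description above, and are not types from the MCP specification or from any SDK.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical model of the three-part structure described above.
// None of these types come from the MCP specification or an SDK.
final class ContextSketch {

    // Context container: conversation history, system instructions, user background.
    record ContextContainer(List<String> history, String systemInstructions, String userBackground) {}

    // Control parameters: settings that tune model behavior.
    record ControlParameters(double temperature, int maxOutputTokens) {}

    // One request = context + control parameters + the current message.
    record Request(ContextContainer context, ControlParameters params, String messageBody) {

        // Builds the request for the next turn: the current message is appended
        // to the history so that the following turn can "remember" it.
        Request nextTurn(String newMessage) {
            List<String> history = new ArrayList<>(context.history());
            history.add(messageBody);
            ContextContainer next = new ContextContainer(
                    List.copyOf(history), context.systemInstructions(), context.userBackground());
            return new Request(next, params, newMessage);
        }
    }

    public static void main(String[] args) {
        Request first = new Request(
                new ContextContainer(List.of(), "You are a weather assistant.", "User lives in Seattle"),
                new ControlParameters(0.7, 512),
                "Will it rain today?");
        Request second = first.nextTurn("And tomorrow?");
        System.out.println(second.context().history()); // [Will it rain today?]
        System.out.println(second.messageBody());       // And tomorrow?
    }
}
```

Keeping the records immutable and returning a new `Request` per turn makes it easy to see exactly which context each turn carries.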

Hands-on

First, create a Spring Boot application with start.spring.io. It requires Spring Boot 3.3+ and JDK 17+.

Then add the Maven dependencies:

    <dependency>
        <groupId>org.springframework.ai</groupId>
        <artifactId>spring-ai-starter-mcp-server</artifactId>
        <version>1.0.0-M8</version>
    </dependency>
    <dependency>
        <groupId>org.springframework</groupId>
        <artifactId>spring-web</artifactId>
        <version>6.1.12</version>
    </dependency>

With that in place, we can implement a demo MCP server:

    package com.nicksxs.mcp_demo;

    import com.jayway.jsonpath.JsonPath;
    import org.springframework.ai.tool.annotation.Tool;
    import org.springframework.ai.tool.annotation.ToolParam;
    import org.springframework.stereotype.Service;
    import org.springframework.web.client.RestClient;

    import java.util.List;
    import java.util.Map;

    @Service
    public class WeatherService {

        private final RestClient restClient;

        public WeatherService() {
            this.restClient = RestClient.builder()
                    .baseUrl("https://api.weather.gov")
                    .defaultHeader("Accept", "application/geo+json")
                    .defaultHeader("User-Agent", "WeatherApiClient/1.0 (your@email.com)")
                    .build();
        }

        // Returns a detailed forecast including:
        // - Temperature and unit
        // - Wind speed and direction
        // - Detailed forecast description
        // @Tool exposes the method as an MCP tool; the description text is illustrative.
        @Tool(description = "Get weather forecast for a specific latitude/longitude")
        public String getWeatherForecastByLocation(
                double latitude,  // Latitude coordinate
                double longitude  // Longitude coordinate
        ) {
            // First look up the grid point for the coordinates
            String pointsResponse = restClient.get()
                    .uri("/points/{lat},{lon}", latitude, longitude)
                    .retrieve()
                    .body(String.class);

            // Extract the forecast URL from the points response
            String forecastUrl = JsonPath.read(pointsResponse, "$.properties.forecast");

            // Fetch the forecast
            String forecast = restClient.get()
                    .uri(forecastUrl)
                    .retrieve()
                    .body(String.class);

            // Extract the details of the first forecast period
            String detailedForecast = JsonPath.read(forecast, "$.properties.periods[0].detailedForecast");
            String temperature = JsonPath.read(forecast, "$.properties.periods[0].temperature").toString();
            String temperatureUnit = JsonPath.read(forecast, "$.properties.periods[0].temperatureUnit");
            String windSpeed = JsonPath.read(forecast, "$.properties.periods[0].windSpeed");
            String windDirection = JsonPath.read(forecast, "$.properties.periods[0].windDirection");

            // Build the result text
            return String.format("Temperature: %s°%s\nWind: %s %s\nForecast: %s",
                    temperature, temperatureUnit, windSpeed, windDirection, detailedForecast);
        }

        @Tool(description = "Get weather alerts for a US state")
        public String getAlerts(
                @ToolParam(description = "Two-letter US state code (e.g. CA, NY)") String state) {
            // Fetch the active weather alerts for the given state
            String alertsResponse = restClient.get()
                    .uri("/alerts/active/area/{state}", state)
                    .retrieve()
                    .body(String.class);

            // Check whether there are any alerts
            List<Map<String, Object>> features = JsonPath.read(alertsResponse, "$.features");
            if (features.isEmpty()) {
                return "There are currently no active alerts.";
            }

            // Build the alert text
            StringBuilder alertInfo = new StringBuilder();
            for (Map<String, Object> feature : features) {
                String event = JsonPath.read(feature, "$.properties.event");
                String area = JsonPath.read(feature, "$.properties.areaDesc");
                String severity = JsonPath.read(feature, "$.properties.severity");
                String description = JsonPath.read(feature, "$.properties.description");
                String instruction = JsonPath.read(feature, "$.properties.instruction");

                alertInfo.append("Event: ").append(event).append("\n");
                alertInfo.append("Affected area: ").append(area).append("\n");
                alertInfo.append("Severity: ").append(severity).append("\n");
                alertInfo.append("Description: ").append(description).append("\n");
                if (instruction != null) {
                    alertInfo.append("Safety instructions: ").append(instruction).append("\n");
                }
                alertInfo.append("\n---\n\n");
            }
            return alertInfo.toString();
        }

        // ......
    }

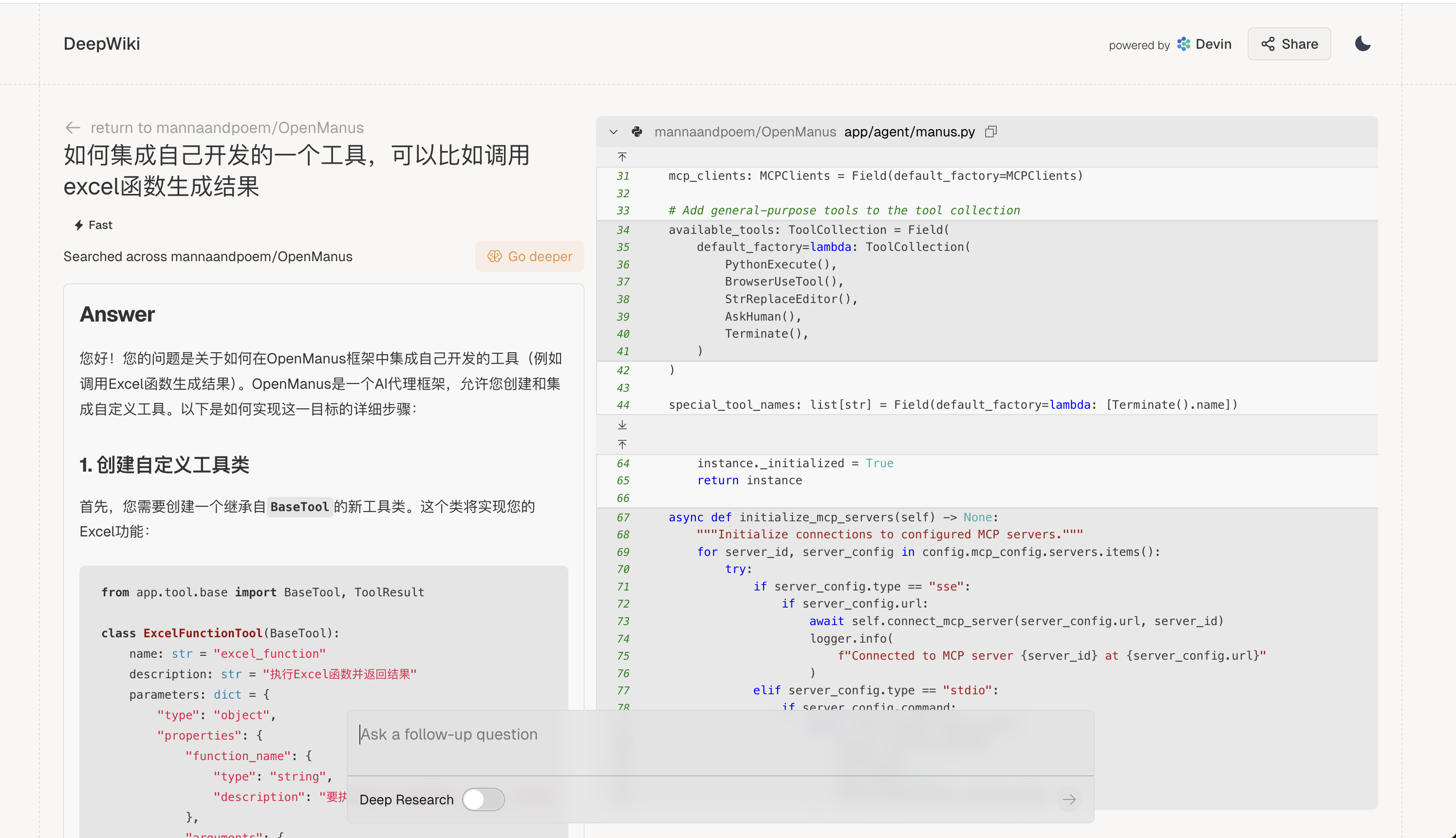

The service requests weather data from api.weather.gov and returns the result.

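Then call it from a client:

    import io.modelcontextprotocol.client.McpClient;
    import io.modelcontextprotocol.client.transport.ServerParameters;
    import io.modelcontextprotocol.client.transport.StdioClientTransport;
    import io.modelcontextprotocol.spec.McpSchema;

    import java.util.Map;

    public class ClientStdio { // class name is illustrative

        public static void main(String[] args) {
            // Start the server jar as a child process and talk to it over stdio
            var stdioParams = ServerParameters.builder("java")
                    .args("-jar", "/Users/shixuesen/Downloads/mcp-demo/target/mcp-demo-0.0.1-SNAPSHOT.jar")
                    .build();
            var stdioTransport = new StdioClientTransport(stdioParams);
            var mcpClient = McpClient.sync(stdioTransport).build();
            mcpClient.initialize();

            // McpSchema.ListToolsResult toolsList = mcpClient.listTools();

            // Pass the coordinates as numbers, matching the tool's double parameters
            McpSchema.CallToolResult weather = mcpClient.callTool(
                    new McpSchema.CallToolRequest("getWeatherForecastByLocation",
                            Map.of("latitude", 47.6062, "longitude", -122.3321)));
            System.out.println(weather.content());

            McpSchema.CallToolResult alert = mcpClient.callTool(
                    new McpSchema.CallToolRequest("getAlerts", Map.of("state", "NY")));
            System.out.println(alert.content());

            mcpClient.closeGracefully();
        }
    }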
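Under the hood, a `callTool` invocation is sent to the server as a JSON-RPC 2.0 request with the method `tools/call`. The sketch below builds such a message by hand, using only the JDK, to show roughly what travels over stdio. The helper names (`toolsCallRequest`, `quote`) are made up for illustration, argument values are simplified to strings, and a real client serializes messages with a JSON library rather than string concatenation.

```java
import java.util.Map;
import java.util.stream.Collectors;

// Illustrative only: hand-built JSON-RPC 2.0 "tools/call" request.
final class ToolsCallSketch {

    // Minimal JSON string quoting: escapes backslash and double quote only,
    // which is enough for the simple values used in this demo.
    static String quote(String s) {
        return "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\"";
    }

    // Builds the request body for calling one tool with string arguments.
    static String toolsCallRequest(int id, String toolName, Map<String, String> arguments) {
        String args = arguments.entrySet().stream()
                .map(e -> quote(e.getKey()) + ":" + quote(e.getValue()))
                .collect(Collectors.joining(",", "{", "}"));
        return "{\"jsonrpc\":\"2.0\",\"id\":" + id
                + ",\"method\":\"tools/call\""
                + ",\"params\":{\"name\":" + quote(toolName)
                + ",\"arguments\":" + args + "}}";
    }

    public static void main(String[] args) {
        // Prints:
        // {"jsonrpc":"2.0","id":1,"method":"tools/call","params":{"name":"getAlerts","arguments":{"state":"NY"}}}
        System.out.println(toolsCallRequest(1, "getAlerts", Map.of("state", "NY")));
    }
}
```

The `name` field is what the server matches against its registered tools, which is why the client has to use the exact method names `getWeatherForecastByLocation` and `getAlerts`.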

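That is a simple working MCP example. One thing to watch out for is managing multiple Java versions: I mainly use JDK 1.8, so switching to 17 also meant updating the Maven configuration to match.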
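When several JDKs are installed, it is easy to launch the server jar with the wrong one. A small check like the following can confirm which Java version a process actually runs on. It is a minimal sketch using only the JDK; the class and method names are hypothetical, and it reads the standard `java.specification.version` system property, which is "1.8" on Java 8 and "17" on Java 17.

```java
// Illustrative helper: checks the running Java version against a minimum.
final class JavaVersionCheck {

    // Converts a specification version to its feature number:
    // "1.8" -> 8, "17" -> 17.
    static int featureVersion(String specVersion) {
        String v = specVersion.startsWith("1.") ? specVersion.substring(2) : specVersion;
        return Integer.parseInt(v);
    }

    // True when the given specification version meets the required feature version.
    static boolean isSupported(String specVersion, int required) {
        return featureVersion(specVersion) >= required;
    }

    public static void main(String[] args) {
        String spec = System.getProperty("java.specification.version");
        if (!isSupported(spec, 17)) {
            System.err.println("JDK 17+ is required, but this process runs on Java " + spec);
            System.exit(1);
        }
        System.out.println("Running on Java " + featureVersion(spec));
    }
}
```

The code avoids newer language features on purpose, so it also compiles and runs on JDK 1.8 and can report the mismatch there.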